Our exploration of set theory last time was a precursor to discussing dilation and erosion. Simply put, dilation makes things bigger and erosion makes things smaller. Seems obvious, right? Let’s see if the mathematicians can muck it up.

Translation

This is not necessarily a fundamental set operation, but it is critical for what we will be doing. Translation can be described as shifting the pixels in the image. We can move them in both the x and y directions, and we represent the amount of movement as a point. To translate the set, we simply add that point to every element of the set. For instance, using the point (20, 20), the puzzle is moved right 20 pixels and down 20 pixels (y grows downward in image coordinates), like this:

The light blue area marks the original location.
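Here is a minimal sketch of translation in code. It does not use the PixelSet class from last time; a plain HashSet of (x, y) tuples stands in for it, and the TranslationSketch class and Translate method are names made up for illustration.

```csharp
using System.Collections.Generic;

// Hypothetical stand-in for PixelSet: a plain set of (x, y) points.
public static class TranslationSketch
{
    // Returns a new set with the offset (dx, dy) added to every point.
    // The input set is left untouched.
    public static HashSet<(int X, int Y)> Translate(
        HashSet<(int X, int Y)> pixels, int dx, int dy)
    {
        var moved = new HashSet<(int X, int Y)>();
        foreach (var (x, y) in pixels)
        {
            moved.Add((x + dx, y + dy));
        }
        return moved;
    }
}
```

Calling Translate(pixels, 20, 20) corresponds to the (20, 20) shift described above.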

Dilation

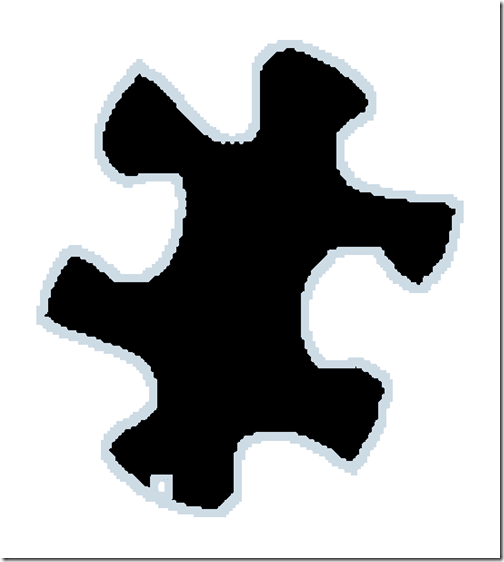

Dilation is an extension of translation from a point to a set of points. If you union the sets created by each translation you end up with dilation. In the following diagram, the black pixels represent the original puzzle piece. The light blue halo represents several translations of the original. Each translation is translucent so I have tried to make the image large enough so you can see the overlap. Notice how the hole is missing?
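For the notation-minded, the same idea can be written in set form. The symbols are labels picked for this sketch: A is the set of pixels, B is the set of translation points, and A_b is A translated by the point b.

$$
A_b = \{\, a + b : a \in A \,\}, \qquad A \oplus B = \bigcup_{b \in B} A_b
$$

Read it as: translate A once for every point in B, then union all of the results.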

The set of points that were used to create the translated sets is called the structuring element. A common structuring element is

{ (-1,-1), (0,-1), (1,-1), (-1,0), (0,0), (1,0), (-1,1), (0,1), (1,1) }

This will expand the image by one pixel in every direction. If we think about this as a binary image, it might be represented by this:

1 1 1
1 1 1
1 1 1

Kinda reminds me of one of our convolution kernels. Structuring elements are often called “probes”. This one is centered at (0,0).
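Typing out every point gets tedious for larger probes, so here is a rough sketch of building a square structuring element from a radius. It uses a HashSet of (x, y) tuples rather than the PixelSet class, and the StructuringElementSketch class and Square method are hypothetical names, not part of the downloadable code.

```csharp
using System.Collections.Generic;

public static class StructuringElementSketch
{
    // Builds the (2 * radius + 1) x (2 * radius + 1) square of offsets
    // centered at (0,0). A radius of 1 gives the nine points listed above.
    public static HashSet<(int X, int Y)> Square(int radius)
    {
        var probe = new HashSet<(int X, int Y)>();
        for (int dy = -radius; dy <= radius; dy++)
        {
            for (int dx = -radius; dx <= radius; dx++)
            {
                probe.Add((dx, dy));
            }
        }
        return probe;
    }
}
```

A larger radius grows the probe, and with it the number of pixels added in every direction.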

Using the PixelSet we created last time, the code is pretty straightforward.

    public void Dilate(PixelSet structuringElem)
    {
        PixelSet dilatedSet = new PixelSet();

        // Each point (translateX, translateY) in the structuring element is one translation.
        foreach (int translateY in structuringElem._pixels.Keys)
        {
            foreach (int translateX in structuringElem._pixels[translateY])
            {
                // Translate the whole set by this point.
                PixelSet translatedSet = new PixelSet();
                foreach (int y in _pixels.Keys)
                {
                    foreach (int x in _pixels[y])
                    {
                        translatedSet.Add(x + translateX, y + translateY);
                    }
                }

                // Dilation is the union of all the translated copies.
                dilatedSet.Union(translatedSet);
            }
        }

        _pixels = new Dictionary<int,List<int>>(dilatedSet._pixels);
    }

Here is the puzzle piece dilated by one pixel in all directions. The hole is gone.

Erosion

Erosion is similar to dilation except that our translation is subtraction instead of addition and we are finding the intersection instead of the union. Here is an image that has been eroded by 10 pixels in all directions. The light blue area is the original puzzle.
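In set form, that description looks like this. The symbols are labels picked for this sketch: A is the set of pixels, B is the structuring element, and A_{-b} is A with the point b subtracted from every element.

$$
A_{-b} = \{\, a - b : a \in A \,\}, \qquad A \ominus B = \bigcap_{b \in B} A_{-b}
$$

A pixel survives only if it appears in every one of the translated copies, which is why thin features and edges disappear.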

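    public void Erode(PixelSet structuringElem)
    {
        // Start from a copy of the original set. This is harmless when the
        // structuring element contains (0,0), since that translation is the
        // set itself; otherwise it adds an extra intersection with the original.
        PixelSet erodedSet = new PixelSet(this);

        foreach (int translateY in structuringElem._pixels.Keys)
        {
            foreach (int translateX in structuringElem._pixels[translateY])
            {
                // Translate the whole set by subtracting this point.
                PixelSet translatedSet = new PixelSet();
                foreach (int y in _pixels.Keys)
                {
                    foreach (int x in _pixels[y])
                    {
                        translatedSet.Add(x - translateX, y - translateY);
                    }
                }

                // Erosion keeps only the pixels common to every translated copy.
                erodedSet.Intersect(translatedSet);
            }
        }

        _pixels = new Dictionary<int,List<int>>(erodedSet._pixels);
    }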

Here is the puzzle eroded by one pixel in all directions. That hole is a little bigger.

Summary

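Like I said at the beginning, dilation makes things bigger and erosion makes things smaller. Interestingly, the two are duals of each other: eroding the puzzle gives the same picture as dilating the background, and dilating the puzzle gives the same picture as eroding the background (with the structuring element reflected through the origin, which changes nothing for a symmetric one like our 3x3 square).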
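That relationship between the two operations can be written down precisely. The notation here is an assumption of this sketch: A is the pixel set, A^c is its complement (the background), B is the structuring element, and B-hat is B reflected through the origin.

$$
\hat{B} = \{\, -b : b \in B \,\}, \qquad (A \ominus B)^c = A^c \oplus \hat{B}, \qquad (A \oplus B)^c = A^c \ominus \hat{B}
$$

The first identity follows from De Morgan's law: the complement of an intersection of translated copies is the union of the complements of those copies.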


Up Next: Opening and Closing

